Changing the harness around a fixed LLM can produce a 6x performance gap on the same benchmark. That is the finding from Stanford and MIT’s Meta Harness paper, which also proposes a solution: an agent that reads all prior harness code, scores, and execution traces through a filesystem, then writes a better harness. The resulting harnesses beat every human-written harness on TerminalBench-2, used a quarter of the tokens of the previous best text classification method (ACE), and made a small model (Haiku 4.5) rank #1 in its weight class.

*Source: arXiv:2603.28052 (Mar 2026) | Project Page | GitHub*

The Key Insight

Same model + bad harness = 12% accuracy

Same model + good harness = 76% accuracy

→ roughly a 6x gap (76 / 12 ≈ 6.3) from the harness alone

A “harness” is everything wrapping the LLM: prompts, tool calls, context management, retrieval strategy, and error handling. Harness engineering can matter as much as model selection, yet the community hand-tunes harnesses while spending billions on model training.
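To make the definition concrete, here is a minimal sketch of a harness as a plain Python object wrapping a model call. It is illustrative only: the class name `Harness`, its fields, and the `echo_model` stand-in are assumptions for this note, not the paper's code or any library's API.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical type: any function mapping a prompt string to a completion.
Model = Callable[[str], str]


def no_retrieval(query: str) -> List[str]:
    """Default retrieval strategy: return no supporting snippets."""
    return []


@dataclass
class Harness:
    """Illustrative wrapper; field names are assumptions, not the paper's API."""
    model: Model
    system_prompt: str = "Answer concisely."               # prompting
    retrieve: Callable[[str], List[str]] = no_retrieval    # retrieval strategy
    max_context_chars: int = 2000                          # context management
    max_retries: int = 2                                   # error handling

    def build_prompt(self, task: str) -> str:
        # Join retrieved snippets and trim them to the context budget.
        context = "\n".join(self.retrieve(task))[: self.max_context_chars]
        return f"{self.system_prompt}\n{context}\nTask: {task}"

    def run(self, task: str) -> str:
        prompt = self.build_prompt(task)
        last_error = None
        for _ in range(self.max_retries + 1):
            try:
                return self.model(prompt).strip()
            except RuntimeError as err:  # retry on transient model failures
                last_error = err
        raise RuntimeError(f"model failed after retries: {last_error}")


def echo_model(prompt: str) -> str:
    # Stand-in for a real LLM call: returns the last line of the prompt.
    return prompt.splitlines()[-1]


harness = Harness(model=echo_model, retrieve=lambda q: ["doc about " + q])
print(harness.run("sort a list"))  # Task: sort a list
```

Every field here is a knob that can change the score without touching the model, which is the space a harness search explores.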

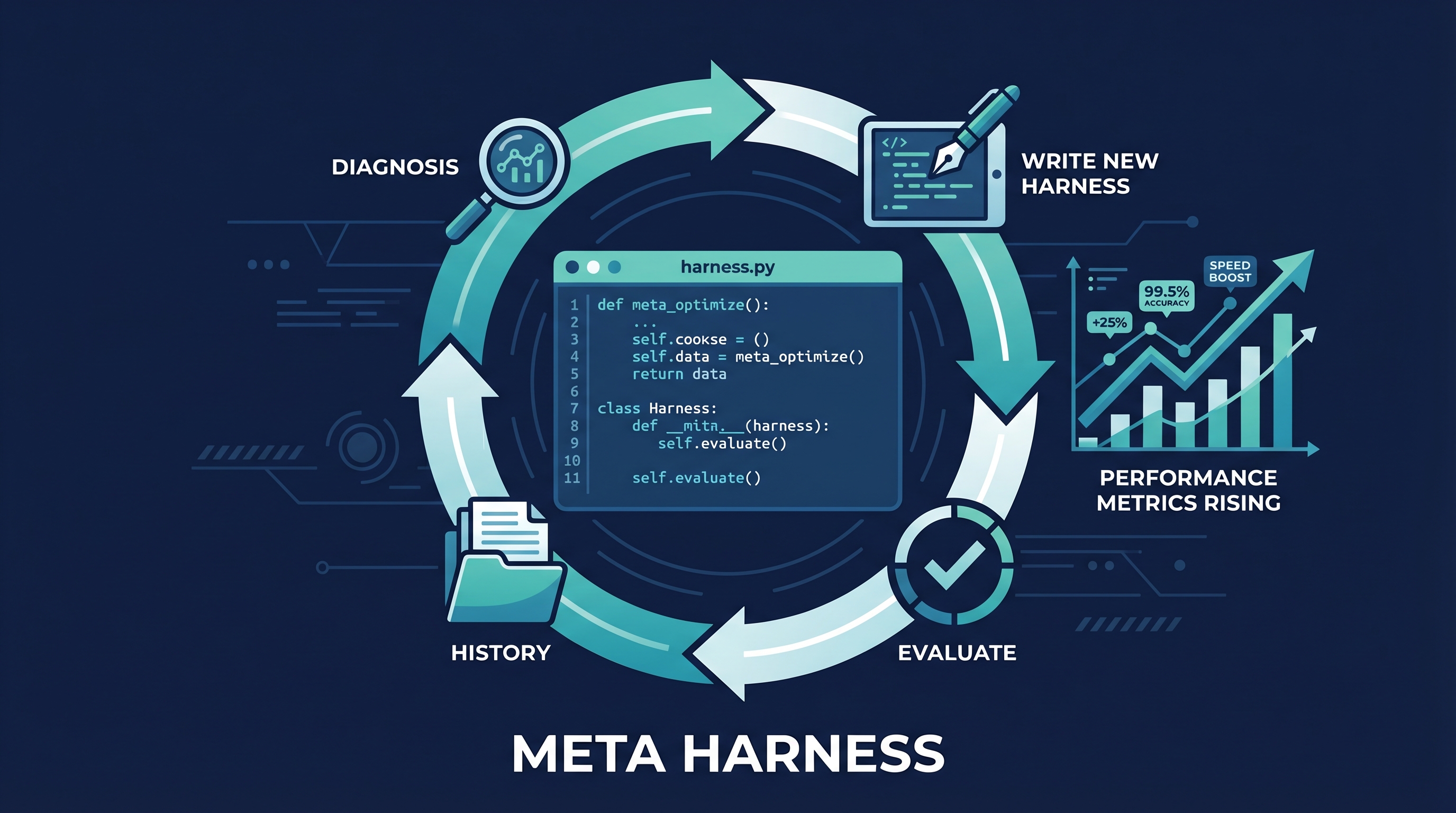

How Meta Harness Works

┌───────────────────────────────────────────────┐
│               META HARNESS LOOP               │
│                                               │
│  ┌────────────────┐    ┌──────────────────┐   │
│  │ Filesystem     │    │ Proposer Agent   │   │
│  │                │    │ (Claude Code)    │   │
│  │ All prior:     │───>│                  │   │
│  │ - code         │    │ grep, cat the    │   │
│  │ - scores       │    │ full history     │   │
│  │ - traces       │    │ (10M tokens)     │   │
│  │ (uncompressed) │    │                  │   │
│  └────────────────┘    │ Counterfactual   │   │
│          ↑             │ diagnosis        │   │
│          │             └────────┬─────────┘   │
│          │                      │             │
│          │              ┌───────v────────┐    │
│          │              │ New Harness    │    │
│          │              │ (actual code)  │    │
│          │              └───────┬────────┘    │
│          │                      │             │
│          │              ┌───────v────────┐    │
│          └──────────────│ Evaluate on    │    │
│                         │ held-out tasks │    │
│                         └────────────────┘    │
└───────────────────────────────────────────────┘

Critical design: the proposer gets full, uncompressed access to all prior code, scores, and traces through the filesystem (10M tokens) rather than compressed summaries. It navigates that history with grep and cat, much like a human developer reviewing prior work.
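The loop described above can be sketched in a few lines of Python. This is a toy under stated assumptions, not the paper's implementation: the directory layout (`run_000/harness.py`, `score.json`, `trace.log`), the `grep` helper, and the `toy_propose` / `toy_evaluate` stand-ins are all hypothetical. In the real system the proposer is a coding agent and evaluation runs held-out benchmark tasks.

```python
import json
import tempfile
from pathlib import Path
from typing import Callable, List, Tuple


def record_run(root: Path, step: int, code: str, score: float, trace: str) -> Path:
    """Store one candidate uncompressed: its code, score, and execution trace."""
    run_dir = root / f"run_{step:03d}"
    run_dir.mkdir(parents=True)
    (run_dir / "harness.py").write_text(code)
    (run_dir / "score.json").write_text(json.dumps({"score": score}))
    (run_dir / "trace.log").write_text(trace)
    return run_dir


def grep(root: Path, needle: str) -> List[str]:
    """Plain substring search over every stored file, similar to `grep -r`."""
    hits = []
    for path in sorted(root.rglob("*")):
        if path.is_file():
            for line in path.read_text().splitlines():
                if needle in line:
                    hits.append(f"{path.relative_to(root)}: {line}")
    return hits


def search(root: Path,
           propose: Callable[[Path], str],
           evaluate: Callable[[str], Tuple[float, str]],
           steps: int) -> Tuple[str, float]:
    """Propose, evaluate, and write back to the filesystem, `steps` times."""
    best_code, best_score = "", float("-inf")
    for step in range(steps):
        code = propose(root)            # proposer reads the full history
        score, trace = evaluate(code)   # stand-in for held-out evaluation
        record_run(root, step, code, score, trace)
        if score > best_score:
            best_code, best_score = code, score
    return best_code, best_score


def toy_propose(root: Path) -> str:
    # Hypothetical diagnosis: add retries only if an earlier trace timed out.
    return "retries = 3" if grep(root, "ERROR: timeout") else "retries = 0"


def toy_evaluate(code: str) -> Tuple[float, str]:
    # Hypothetical evaluator: harnesses with retries score higher.
    if "retries = 3" in code:
        return 0.8, "all tasks passed"
    return 0.2, "ERROR: timeout on task 7"


with tempfile.TemporaryDirectory() as tmp:
    best_code, best_score = search(Path(tmp), toy_propose, toy_evaluate, steps=3)
    print(best_code, best_score)  # retries = 3 0.8
```

The point of the sketch is the data flow: nothing is summarized before the proposer sees it, so a failure buried in one old trace can still shape the next candidate.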

Results

| Benchmark | Meta Harness | Compared against | Outcome |
|---|---|---|---|
| Text Classification (avg) | 48.6% | ACE: 40.9% | +7.7 points |
| Token usage (vs ACE) | 1/4 of ACE | ACE | 4x cheaper |
| IMO-level math (pass@1) | +4.7 points | BM25 baseline | Transfers across 5 models |
| TerminalBench-2 (Opus 4.6) | 76.4% | All human harnesses | #1 overall |
| TerminalBench-2 (Haiku 4.5) | 37.6% | All Haiku agents | #1 in weight class |

The small model result is the most striking: an optimized harness made a cheap model (Haiku 4.5, 37.6%) the top-ranked agent in its weight class on TerminalBench-2.

Why This Matters

| Implication | Detail |
|---|---|
| Harness > Model | 6x gap from harness alone. Community under-invests in scaffolding vs. model training. |
| Self-improving infrastructure | AI systems that optimize their own tooling, not just solve end tasks (Karpathy’s autoresearch vision). |
| Small models punch up | Haiku 4.5 + Meta Harness = #1 in weight class. Optimized harness substitutes for larger models. |
| Transferability | IMO math harness discovered with one model improved accuracy across 5 different models. |
| One-time cost | Search uses 10M tokens/iteration, but the resulting harness is cheaper to run than alternatives. |

Authors

| Author | Affiliation |
|---|---|
| Yoonho Lee | Stanford IRIS Lab |
| Roshen Nair | Stanford |
| Qizheng Zhang | Stanford |
| Kangwook Lee | KRAFTON |
| Omar Khattab | MIT |
| Chelsea Finn | Stanford |

How LearnAI Team Could Use This

- Research seminar paper: A must-read for anyone studying AI agents. The 6x harness gap finding alone is worth a full class discussion, and it connects directly to Q’s harness engineering wiki entries.

- Student research projects: Students can replicate the text classification experiment by writing multiple harnesses for the same model and measuring the performance gap. The exercise teaches that infrastructure matters.

- Cost optimization: Because optimized harnesses make small models competitive, the team can pair cheaper models (Haiku) with better scaffolding instead of paying for Opus on every task.

- Program analysis connection: Meta Harness’s “counterfactual diagnosis across execution traces” is essentially program analysis applied to agent behavior, which makes it directly relevant to Q’s research.

- Teaching meta-optimization: “An agent that improves the agent” is a powerful teaching example for AI systems courses. Where does the optimization loop end?
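For the student replication idea, a classroom-sized starting point might look like the sketch below: hold one model fixed, run several harnesses over the same labeled examples, and report the ratio between the best and worst accuracy. Everything here is a toy assumption, including `fixed_model` (a rule-based stand-in for a frozen LLM), the two harness functions, and the four-example dataset. The numbers it prints describe only this toy, not the paper's results.

```python
from typing import Callable, Dict, List, Tuple

Example = Tuple[str, str]                # (text, gold label)
Model = Callable[[str], str]
HarnessFn = Callable[[Model, str], str]  # (model, text) -> predicted label


def fixed_model(prompt: str) -> str:
    """Toy stand-in for a frozen LLM: it only classifies properly when the
    prompt lists the allowed labels; otherwise it always guesses 'positive'."""
    text = prompt.splitlines()[-1].lower()
    if "labels:" not in prompt.lower():
        return "positive"
    return "positive" if ("good" in text or "great" in text) else "negative"


def bare_harness(model: Model, text: str) -> str:
    # Weak harness: pass the raw text with no instructions.
    return model(text)


def labeled_harness(model: Model, text: str) -> str:
    # Stronger harness: tell the model which labels are allowed.
    return model("Labels: positive, negative\n" + text)


def accuracy(harness: HarnessFn, model: Model, data: List[Example]) -> float:
    correct = sum(harness(model, text) == gold for text, gold in data)
    return correct / len(data)


def harness_gap(harnesses: Dict[str, HarnessFn], model: Model,
                data: List[Example]) -> Tuple[Dict[str, float], float]:
    """Score every harness on the same model and return best/worst ratio."""
    scores = {name: accuracy(h, model, data) for name, h in harnesses.items()}
    worst = min(scores.values())
    gap = max(scores.values()) / worst if worst > 0 else float("inf")
    return scores, gap


DATA: List[Example] = [
    ("great movie", "positive"),
    ("bad plot", "negative"),
    ("good acting", "positive"),
    ("awful pacing", "negative"),
]

scores, gap = harness_gap(
    {"bare": bare_harness, "labeled": labeled_harness}, fixed_model, DATA
)
print(scores, gap)  # {'bare': 0.5, 'labeled': 1.0} 2.0
```

Swapping `fixed_model` for a real API call and the dataset for a real classification set turns this into the experiment described in the list above.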

Real-World Use Cases

- Enterprise harness optimization: Companies running AI agents at scale can use Meta Harness to automatically discover better scaffolding, reducing costs while improving quality.

- Benchmark competitions: Teams competing on TerminalBench, SWE-bench, etc. can use Meta Harness to search the harness space instead of manually tuning prompts.

- Model evaluation: The 6x harness gap means benchmark results that do not control for harness quality are unreliable. Meta Harness offers one way to normalize for it.

- AutoML for agents: Just as AutoML optimizes model hyperparameters, Meta Harness optimizes agent infrastructure. It is the next logical step in the “agents that build agents” trajectory.