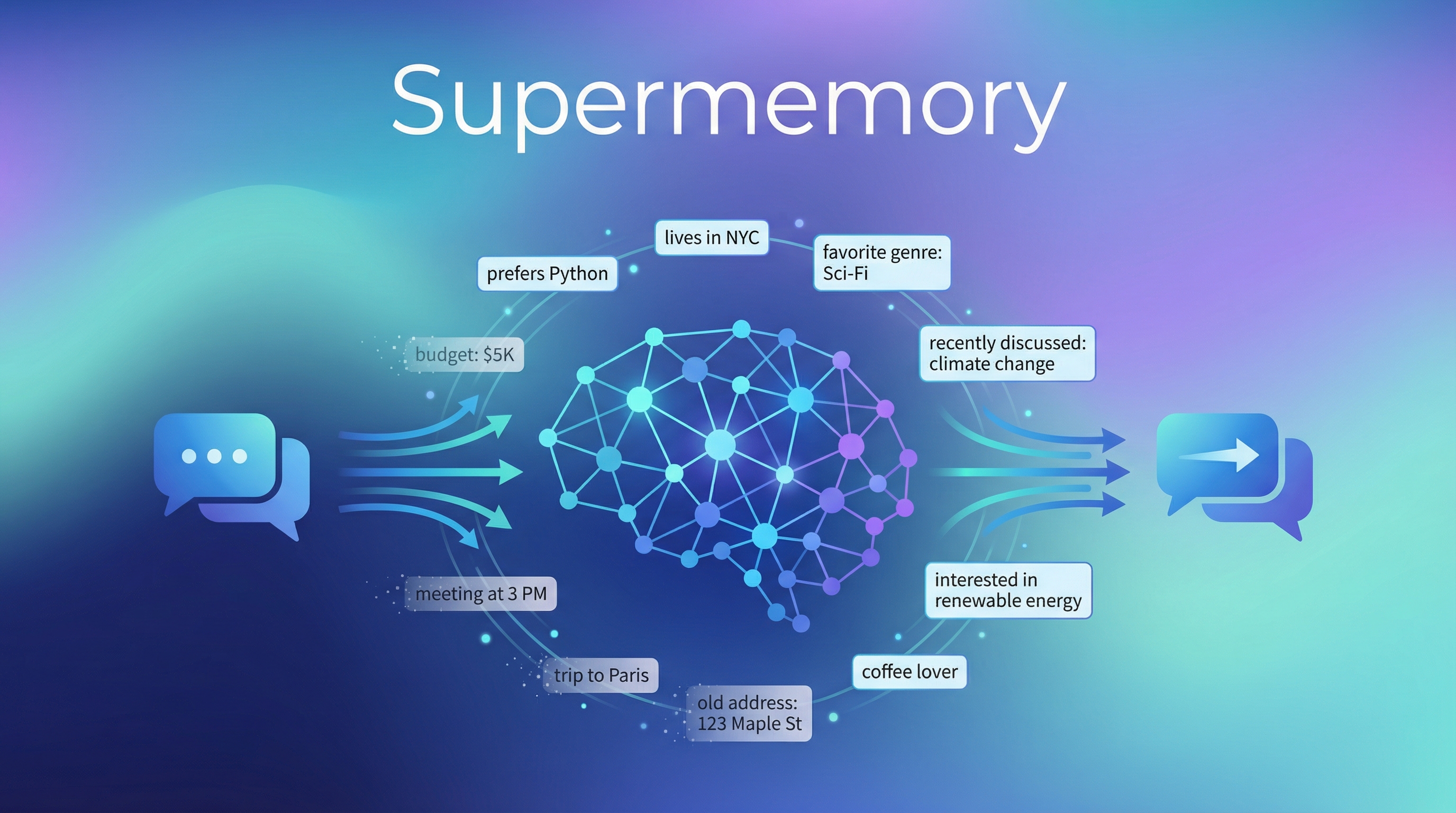

Your AI forgets everything between conversations. Ask it about something you told it last week and you get a blank stare. Supermemory addresses this with a unified memory and context API: it extracts facts from conversations, tracks how they change over time, automatically forgets expired information, and gives any AI app a persistent, personalized memory layer. The project reports ranking #1 on three memory benchmarks: LongMemEval, LoCoMo, and ConvoMem.

*Source: GitHub, supermemoryai/supermemory (21K+ stars) | 亚莱加德 on Douyin | supermemory.ai*

Why Not Just RAG?

Traditional RAG retrieves the same documents for all users — it doesn’t know who’s asking. Supermemory is different:

    Traditional RAG:
      User asks → Search docs → Same results for everyone → Answer

    Supermemory:
      User asks → Search docs + recall user-specific facts → Personalized answer
                                │
                                ├── "User prefers Python over Java"
                                ├── "User's project uses PostgreSQL"
                                └── "User said yesterday: budget is $5K"
                                    (supersedes last month's "$10K")

Key difference: Supermemory tracks facts per user over time, handles contradictions (newer info supersedes older), and auto-forgets temporary context.
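To make the "newer info supersedes older" idea concrete, here is a minimal in-memory sketch. It is an illustration only, not Supermemory's implementation or API: the `UserFact` shape, the `UserFactStore` class, and the rule "latest timestamp wins per key" are all assumptions chosen for clarity.

```typescript
// Hypothetical illustration: not Supermemory's actual data model or API.
interface UserFact {
  key: string;        // what the fact is about, e.g. "budget"
  value: string;      // the value stated by the user
  recordedAt: number; // Unix epoch milliseconds
}

class UserFactStore {
  // userId -> (fact key -> most recent fact)
  private facts = new Map<string, Map<string, UserFact>>();

  // Record a fact; a newer fact with the same key supersedes the older one.
  remember(userId: string, fact: UserFact): void {
    const perUser = this.facts.get(userId) ?? new Map<string, UserFact>();
    const existing = perUser.get(fact.key);
    if (!existing || fact.recordedAt >= existing.recordedAt) {
      perUser.set(fact.key, fact);
    }
    this.facts.set(userId, perUser);
  }

  // Return the current value for a key, or undefined if nothing is stored.
  recall(userId: string, key: string): string | undefined {
    return this.facts.get(userId)?.get(key)?.value;
  }
}

const factStore = new UserFactStore();
const DAY_MS = 24 * 60 * 60 * 1000;
const referenceTime = Date.UTC(2025, 0, 31);

// Last month's budget, then yesterday's update.
factStore.remember("user123", { key: "budget", value: "$10K", recordedAt: referenceTime - 30 * DAY_MS });
factStore.remember("user123", { key: "budget", value: "$5K", recordedAt: referenceTime - DAY_MS });

const currentBudget = factStore.recall("user123", "budget"); // "$5K"
```

Keying facts by user is what separates this from shared document retrieval: two users asking the same question can get different recalled context.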

Core Capabilities

| Feature | What It Does |
|---|---|
| Fact extraction | Automatically pulls facts from conversations, with no manual tagging |
| Temporal awareness | Knows that “I moved to NYC” supersedes “I live in SF” from last month |
| Auto-forgetting | Expired info (e.g., “meeting at 3pm today”) is purged automatically |
| User profiles | Auto-maintained context combining stable facts + recent activity (~50ms retrieval) |
| Hybrid search | RAG + memory queries combined: knowledge base docs + personalized context in one call |
| Multi-modal | PDFs, images (OCR), videos (transcription), code (AST-aware chunking) |
| Data connectors | Real-time sync with Google Drive, Gmail, Notion, OneDrive, GitHub |
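Auto-forgetting can be pictured as an expiry check applied at read time. The sketch below is a simplified assumption about how such a rule might look: the `TimedMemory` shape and the `expiresAt` field are hypothetical and do not describe Supermemory's schema.

```typescript
// Hypothetical sketch: field names and the expiry rule are assumptions.
interface TimedMemory {
  text: string;
  expiresAt?: number; // Unix epoch milliseconds; omitted for durable facts
}

// Keep only the memories that are still valid at time `nowMs`.
function forgetExpired(memories: TimedMemory[], nowMs: number): TimedMemory[] {
  return memories.filter((m) => m.expiresAt === undefined || m.expiresAt > nowMs);
}

const threePm = Date.UTC(2025, 0, 31, 15, 0);
const timedMemories: TimedMemory[] = [
  { text: "Lives in NYC" },                               // durable fact
  { text: "Meeting at 3pm today", expiresAt: threePm },   // temporary context
];

const beforeMeeting = forgetExpired(timedMemories, threePm - 60000); // both kept
const afterMeeting = forgetExpired(timedMemories, threePm + 60000);  // only "Lives in NYC"
```

A real system would also need to decide which extracted facts are temporary in the first place; that classification step is outside this sketch.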

Quick Start

As MCP Server (Claude Code / Cursor / VS Code)

    npx -y install-mcp@latest https://mcp.supermemory.ai/mcp --client claude --oauth=yes

With this one command, Claude Code gains persistent memory across sessions.

As SDK

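    npm install supermemory   # or: pip install supermemory

Then, in JavaScript or TypeScript (confirm the exact export and parameter names against the docs for your SDK version):

    // Assumes the API key is provided via the SUPERMEMORY_API_KEY environment variable.
    import Supermemory from 'supermemory';

    const client = new Supermemory();

    // Store a memory, scoped to one user via a container tag
    await client.add({
      content: "User prefers dark mode and uses vim keybindings",
      containerTag: "user123",
    });

    // Retrieve the user's profile plus memories relevant to a query
    const result = await client.profile({ containerTag: "user123", q: "editor preferences" });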
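Once a profile comes back, a typical next step is to fold it into the model's system prompt. The sketch below shows one way to do that. The `ProfileSnapshot` shape (`stableFacts`, `recentActivity`) is a hypothetical stand-in for "stable facts + recent activity"; the real SDK response may use different field names, so map it accordingly.

```typescript
// Hypothetical shape: the real SDK response may use different field names.
interface ProfileSnapshot {
  stableFacts: string[];    // long-lived preferences and facts
  recentActivity: string[]; // short-term context
}

// Turn a profile into a plain-text system prompt for any chat model.
function buildSystemPrompt(profile: ProfileSnapshot): string {
  const lines = ["You are a helpful assistant.", "", "Known about this user:"];
  for (const fact of profile.stableFacts) {
    lines.push(`- ${fact}`);
  }
  // Omit the recent-context section entirely when there is nothing to show.
  if (profile.recentActivity.length > 0) {
    lines.push("", "Recent context:");
    for (const item of profile.recentActivity) {
      lines.push(`- ${item}`);
    }
  }
  return lines.join("\n");
}

const systemPrompt = buildSystemPrompt({
  stableFacts: ["Prefers dark mode", "Uses vim keybindings"],
  recentActivity: ["Asked about editor preferences"],
});
```

Keeping this step as a pure function makes it easy to test without network access and to swap in whichever memory provider supplies the profile.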

Framework Integrations

Drop-in wrappers for: Vercel AI SDK, LangChain, LangGraph, OpenAI Agents SDK, Mastra, Agno, n8n.

Benchmark Results

| Benchmark | What It Tests | Supermemory Score | Rank |
|---|---|---|---|
| LongMemEval | Long-term memory with knowledge updates | 81.6% accuracy | #1 |
| LoCoMo | Fact recall across extended conversations | Top | #1 |
| ConvoMem | Personalization and preference learning | Top | #1 |

The team also open-sourced MemoryBench — a benchmarking framework for comparing memory providers head-to-head.

How It Compares

| | Supermemory | Mem0 | Zep | Letta |
|---|---|---|---|---|
| Approach | Unified memory ontology | Graph-enhanced memory | Temporal knowledge graph | Self-editing memory (OS metaphor) |
| Temporal handling | Auto-supersede + auto-forget | Manual updates | Tracks fact changes over time | Archival store |
| User profiles | Auto-generated, ~50ms | Manual configuration | Built-in | Per-agent state |
| MCP support | Yes (one-command install) | Via community servers | No | No |
| Multi-modal | PDF, images, video, code | Text-focused | Text-focused | Text-focused |
| GitHub stars | 21K+ | 48K+ | 3K+ | 15K+ |
| Best for | Full-stack AI apps with personalization | Chatbots, personal assistants | Enterprise with compliance needs | Agent runtimes with autonomy |

Architecture: One Ontology, Not Five Systems

Most memory solutions require you to configure separate systems: a vector DB for search, a graph DB for relationships, a key-value store for facts, and a profile builder for users. Supermemory consolidates these into a single unified memory ontology:

    ┌─────────────────────────────────────────┐
    │        Supermemory Unified Layer        │
    │                                         │
    │  ┌──────────┐  ┌──────────┐  ┌────────┐ │
    │  │   Fact   │  │  Search  │  │Profile │ │
    │  │Extraction│  │ (hybrid) │  │Builder │ │
    │  └────┬─────┘  └────┬─────┘  └───┬────┘ │
    │       │             │            │      │
    │       └─────────────┼────────────┘      │
    │                     ▼                   │
    │            ┌──────────────────┐         │
    │            │  Unified Memory  │         │
    │            │     Ontology     │         │
    │            └──────────────────┘         │
    │                     │                   │
    │       ┌─────────────┼────────────┐      │
    │       ▼             ▼            ▼      │
    │  ┌──────────┐  ┌──────────┐  ┌────────┐ │
    │  │Connectors│  │  Multi-  │  │ Auto-  │ │
    │  │(Drive,   │  │  modal   │  │ forget │ │
    │  │ Notion)  │  │(PDF,img) │  │        │ │
    │  └──────────┘  └──────────┘  └────────┘ │
    └─────────────────────────────────────────┘

No separate vector DB to configure. No graph DB to maintain. One system.
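From the caller's side, "one system" means a single query can return shared documents and user-specific memories in one ranked list. The sketch below illustrates that merge step only. The `RankedHit` type, the scores, and the "sort by score" rule are illustrative assumptions, not a description of how Supermemory ranks results internally.

```typescript
// Hypothetical sketch: types, scores, and the merge rule are assumptions.
interface RankedHit {
  source: "document" | "memory";
  text: string;
  score: number; // higher means more relevant
}

// Merge shared-document hits with user-specific memory hits into one ranked list.
function mergeHits(docHits: RankedHit[], memoryHits: RankedHit[], limit: number): RankedHit[] {
  return docHits
    .concat(memoryHits)                // new array, inputs stay untouched
    .sort((a, b) => b.score - a.score) // best score first
    .slice(0, limit);
}

const mergedHits = mergeHits(
  [
    { source: "document", text: "PostgreSQL tuning guide", score: 0.71 },
    { source: "document", text: "MySQL migration notes", score: 0.42 },
  ],
  [{ source: "memory", text: "User's project uses PostgreSQL", score: 0.88 }],
  2
);
// mergedHits: the memory hit first, then the PostgreSQL tuning guide
```

In a setup with separate vector and profile stores, this merge (and the score calibration it depends on) is code the application has to own; a unified layer moves it behind the API.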

How the LearnAI Team Could Use This

- Persistent course assistants — Give AI tutors memory across sessions so students don’t re-explain goals, misconceptions, or project context.

- Research project continuity — Store evolving facts about datasets, hypotheses, and results so research agents resume accurately.

- Personalized AI literacy coaching — Track each learner’s preferred tools, skill level, and recurring blockers.

- Faculty support workflows — Maintain context across syllabus design, assignment drafting, rubric iteration, and feedback cycles.

Real-World Use Cases

- Personal AI assistants — Remember user preferences, projects, deadlines, and recent decisions across conversations.

- Customer support agents — Retrieve account-specific history without forcing users to repeat context.

- AI coding agents — Preserve repository conventions, architectural decisions, and developer preferences across sessions.

- Enterprise knowledge apps — Combine shared documents with user-specific context for more relevant search.

- Sales workflows — Track changing customer needs, budgets, stakeholders, and follow-up commitments over time.

Links

- GitHub: supermemoryai/supermemory (21K+ stars)

- Docs: supermemory.ai/docs

- Console: console.supermemory.ai

- MemoryBench: Open-source benchmarking for memory providers

- MCP install:

npx -y install-mcp@latest https://mcp.supermemory.ai/mcp --client claude --oauth=yes