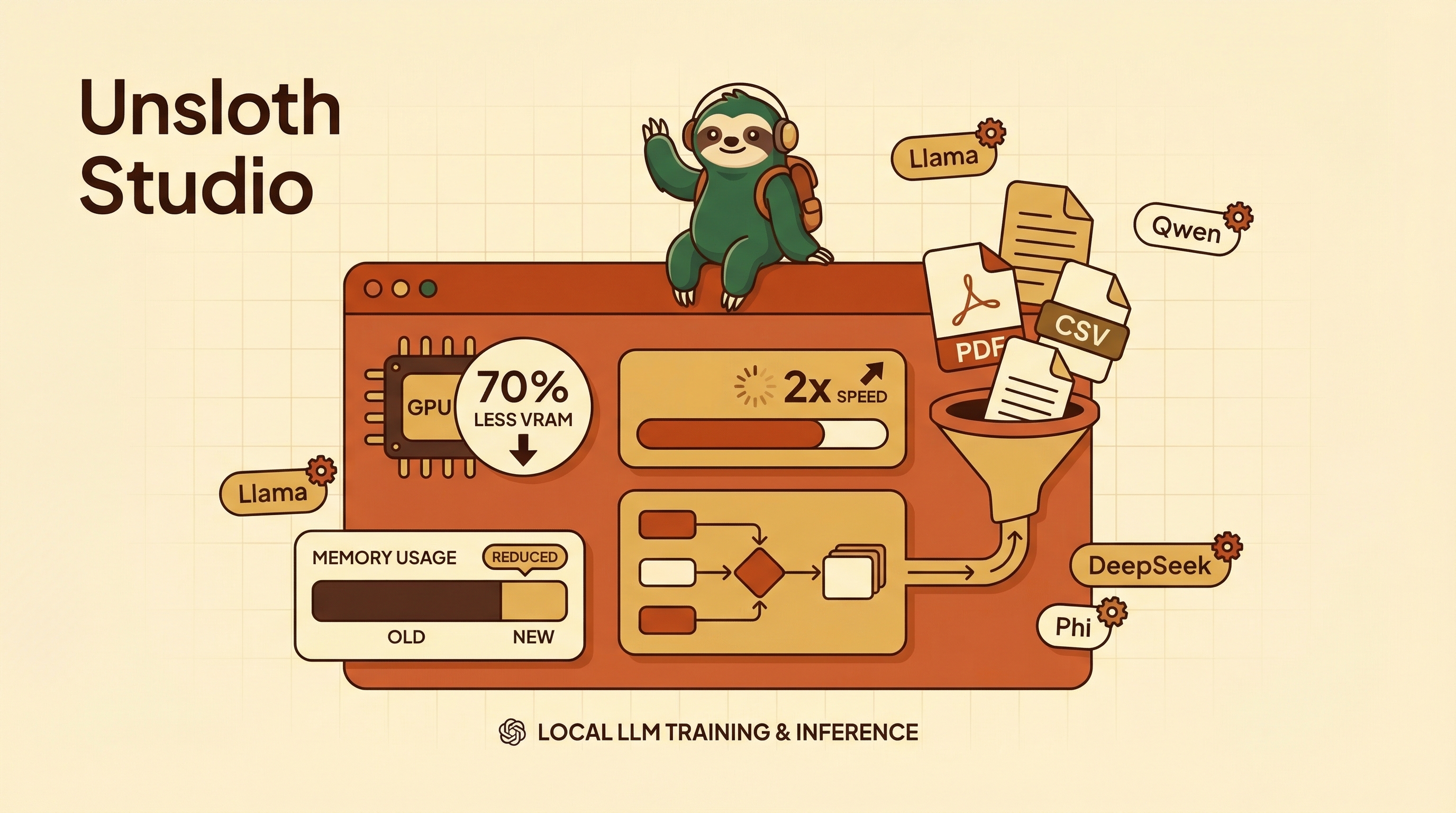

Unsloth Studio is an open-source, no-code Web UI that lets you train, run, and export LLMs from a single local dashboard. The headline claims are 2x faster training and 70% less VRAM via QLoRA; the example given is a 7B model fine-tune that used to need 24GB of VRAM and now fits in 16GB. It supports 500+ models (Llama 4, Qwen 3.5, DeepSeek, Gemma), auto-generates training datasets from PDFs/CSVs, and includes GRPO (Group Relative Policy Optimization, the reinforcement learning technique behind DeepSeek-R1’s reasoning).

*Source: GitHub - unslothai/unsloth | Unsloth Website | MarkTechPost | NVIDIA Blog: Fine-Tuning with Unsloth | SitePoint Tutorial*

What It Does

| Feature | Details |
|---|---|
| Training | Fine-tune 500+ models with LoRA/QLoRA, 2x speed, 70% less VRAM |
| Inference | Run models locally, GGUF support for CPU-only machines |
| Dataset creation | Auto-generate training data from PDF, CSV, DOCX |
| GRPO training | DeepSeek-R1’s RL technique: reasoning via group relative policy optimization |
| Export | GGUF, Safetensors, auto-tuned inference parameters |
| Model comparison | Side-by-side output comparison across models |
| Code execution | Built-in environment to test model outputs |
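To make the "group relative" part of GRPO concrete, here is a minimal sketch of the advantage calculation at its core: several answers are sampled for the same prompt, each gets a reward, and every reward is normalized against its own group. This is an illustration only, not Unsloth's implementation. The function name, the sample rewards, and the use of the population standard deviation are assumptions, and the full algorithm also includes a clipped policy-ratio objective and a KL penalty that are omitted here.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards, eps=1e-8):
    """Normalize each reward against the mean and spread of its own group.

    `rewards` holds one score per sampled answer to the same prompt.
    `eps` avoids division by zero when every answer scored the same.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)  # assumption: population std; implementations may differ
    return [(r - mu) / (sigma + eps) for r in rewards]


# Hypothetical rewards for four sampled answers (1.0 = correct, 0.0 = wrong)
rewards = [1.0, 0.0, 0.0, 1.0]
advantages = group_relative_advantages(rewards)
print(advantages)
```

Answers that beat their group's average get a positive advantage and are reinforced; answers below it are pushed down. Because the baseline comes from the group itself, no separate value model is needed.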

Hardware Requirements

| Platform | Capability |
|---|---|
| NVIDIA GPU (RTX 30/40/50, DGX) | Full training + inference |
| Apple Silicon Mac | Inference now; MLX training coming soon |
| CPU only (any platform) | Chat inference with GGUF models |
| Windows / Linux / WSL | Full support |

Key: a 7B model fine-tune that previously needed a 24GB GPU now fits in 16GB, which puts it within reach of 16GB consumer cards such as the RTX 4060 Ti 16GB or RTX 4070 Ti Super (the base RTX 4070 has 12GB).
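A rough back-of-the-envelope calculation shows where most of the saving comes from. The sketch below estimates the memory taken by the weights alone at 16-bit and 4-bit precision. It deliberately ignores activations, optimizer state, adapter weights, and quantization overhead, so real totals are higher and it does not reproduce the 24GB and 16GB figures above. The function name is hypothetical.

```python
def weight_memory_gb(n_params, bits_per_param):
    """Memory for the model weights alone, in GB (10^9 bytes)."""
    return n_params * bits_per_param / 8 / 1e9


n_params = 7e9  # a 7B-parameter model

fp16_gb = weight_memory_gb(n_params, 16)  # 16-bit weights
int4_gb = weight_memory_gb(n_params, 4)   # 4-bit quantized weights, as in QLoRA

print(f"16-bit weights: {fp16_gb:.1f} GB")
print(f"4-bit weights:  {int4_gb:.1f} GB")
print(f"Saved on weights alone: {fp16_gb - int4_gb:.1f} GB")
```

Under these assumptions the weights shrink from about 14 GB to about 3.5 GB, which is the headroom that lets the rest of the training state fit on a smaller card.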

Quick Start

    # Install
    pip install unsloth

    # Launch the Studio UI
    unsloth studio

Or via Docker — see installation docs.

Why This Matters

Democratization of fine-tuning. Before tools like this, fine-tuning LLMs typically required deep ML expertise, expensive GPUs, and hand-written training scripts. Now a no-code UI lets anyone with a consumer GPU create custom models from their own data.

Not just another inference tool. Coverage of Unsloth Studio makes an important distinction: Unsloth isn’t “LM Studio with training.” It’s a unified training + inference + export pipeline. Train a model, test it, export it as GGUF, and deploy — all in one interface.

QLoRA’s real impact. The 70% VRAM reduction isn’t marketing; it’s the practical result of 4-bit quantized LoRA fine-tuning, where the base model’s weights are frozen and stored in 4-bit precision while only small low-rank adapter matrices are trained. This means research labs with limited GPU budgets (like university labs) can train models that were previously only accessible to well-funded teams.
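The adapter side of LoRA can be sketched with simple parameter counting. For one weight matrix of shape `d_out x d_in`, a rank-`r` adapter adds two small matrices with `r * (d_in + d_out)` parameters in total. The layer size and rank below are illustrative assumptions, not values taken from Unsloth or from any specific model.

```python
def lora_adapter_params(d_in, d_out, rank):
    """Trainable parameters added by a rank-`rank` LoRA adapter on one
    d_out x d_in weight matrix: A is (rank x d_in), B is (d_out x rank)."""
    return rank * (d_in + d_out)


# Hypothetical projection layer and rank, chosen only for illustration
d_in = d_out = 4096
rank = 16

full_params = d_in * d_out
adapter_params = lora_adapter_params(d_in, d_out, rank)

print(f"Full matrix:  {full_params:,} parameters")
print(f"LoRA adapter: {adapter_params:,} parameters")
print(f"Trainable share: {adapter_params / full_params:.2%}")
```

With these numbers the adapter is under 1% of the size of the matrix it modifies, so gradients and optimizer state are only needed for a small fraction of the model.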

How LearnAI Team Could Use This

| Use Case | How |
|---|---|
| AI/ML course project | Students fine-tune a small model on domain-specific data (e.g., security vulnerability descriptions) |
| Understanding fine-tuning | No-code UI lets students focus on concepts (dataset quality, hyperparameters), not infrastructure |
| GRPO experiments | Students can experiment with the same RL technique that powered DeepSeek-R1 |
| Research on a budget | University GPU lab with RTX 3090s (24GB) can fine-tune 7B models with VRAM headroom to spare |
| Dataset creation exercise | Students prepare PDFs → auto-generate training data → fine-tune → evaluate |
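For the dataset creation exercise, it can help students to see what "training data" looks like once it leaves the source document. The sketch below turns question/answer rows from a CSV into chat-style JSONL records using only the standard library. It is not how Unsloth Studio generates datasets; the column names, the sample rows, and the `messages` record layout (a common chat convention) are all assumptions made for illustration.

```python
import csv
import io
import json


def rows_to_chat_records(csv_text, question_col, answer_col):
    """Convert CSV rows into chat-style records, skipping incomplete rows."""
    reader = csv.DictReader(io.StringIO(csv_text))
    records = []
    for row in reader:
        question = (row.get(question_col) or "").strip()
        answer = (row.get(answer_col) or "").strip()
        if not question or not answer:
            continue  # drop rows that would teach the model nothing
        records.append({
            "messages": [
                {"role": "user", "content": question},
                {"role": "assistant", "content": answer},
            ]
        })
    return records


# Hypothetical course data; the second data row has no answer and is skipped
sample_csv = (
    "question,answer\n"
    "What is SQL injection?,Untrusted input altering a database query.\n"
    "What is XSS?,\n"
)

records = rows_to_chat_records(sample_csv, "question", "answer")
jsonl = "\n".join(json.dumps(record) for record in records)
print(jsonl)
```

Filtering out incomplete rows before training is a small example of the dataset quality decisions the exercise is meant to surface.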

Connection to LAI: Can students who fine-tune their own models develop better intuition for how LLMs work? Unsloth’s no-code approach makes this experiment feasible at scale.

Real-World Use Cases

- Domain-specific assistants — Fine-tune small models on company docs, support tickets, or course material for local deployment.

- Budget research labs — Run LoRA/QLoRA experiments on consumer NVIDIA GPUs instead of renting large cloud clusters.

- Private data workflows — Train and test local models without uploading sensitive datasets to hosted fine-tuning services.