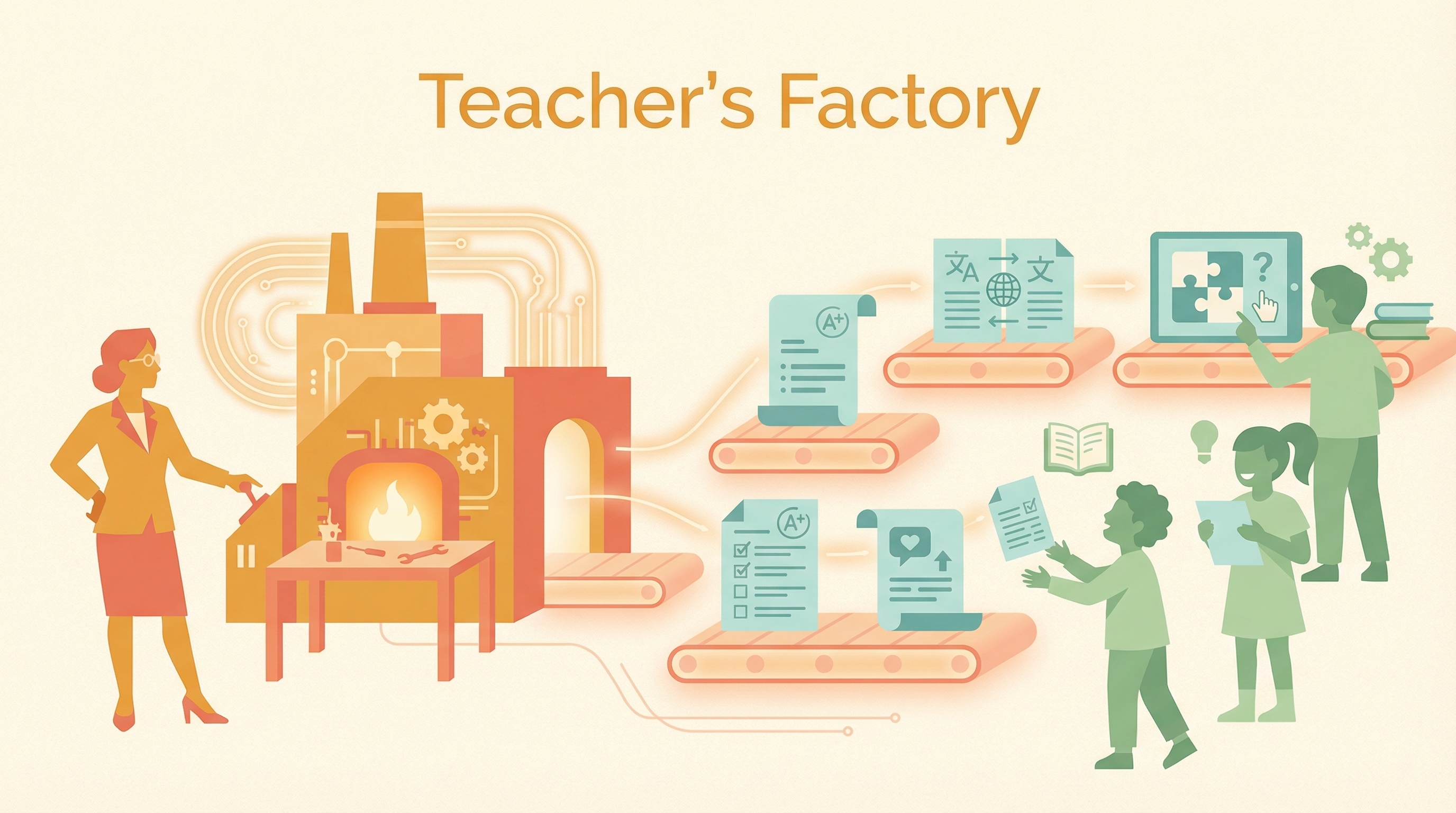

An overlooked truth about AI in education: AI may be less a student’s “cheat tool” than a teacher’s “factory.” One educator used AI to create highly customized teaching tools that were previously unimaginable. Yet the debate keeps focusing on students. If AI is a supercar already on the track, most teachers are still figuring out how to open the door. That raises the harder question nobody asks: who is training the teachers?

*Sources: Reddit, “I’m a Teacher and a Claude Nerd”; Anthropic × Teach For All Partnership; Introducing Claude for Education*

The Uncomfortable Data

A frontline teacher shared a disturbing observation: AI has NOT become students’ “private tutor”; it has become a cognitive offloading tool. In his class of 120 students, fewer than 5 actually use AI to learn. The rest use it to avoid learning.

A German CS teacher corroborated this with a sharper framing, arguing that AI is splitting students into two groups:

| Group | Size | Behavior |
|---|---|---|
| Learners | ~4% | Use AI to learn everything; it accelerates them |
| Escapers | ~96% | Use AI to escape everything; it sets them back |

This contradicts the popular image of “AI as the ultimate personalized tutor.” For most students, it is just a more advanced answer-search tool, a “cognitive outsourcing” package. They use it to bypass thinking, not to aid it.

The Core Argument

The leverage point isn’t the student; it’s the teacher:

| Who Gets the Tool | Outcome |
|---|---|
| Students without judgment | Dependency and regression; a “shortcut to capability” that erodes learning |
| Teachers with domain expertise | 10x value: customized materials, instant feedback, personalized curriculum |

One German teacher uses Claude to prepare his 45-minute lessons, including “one-time-use” software tools written for specific teaching moments. A year ago this would have been fantasy; now it takes about as long as regular lesson prep. He uses AI to empower himself, building highly customized tools that were out of reach before.

The tool is the same. The variable is the user’s cognitive depth. This is why the “should students use AI?” debate misses the point. The real question is: how do we make teachers fluent in AI so they can create the learning experiences that students can’t create for themselves?

The Teacher Readiness Gap

AI capability: ████████████████████ (ready)

Student adoption: ██████████████░░░░░░ (already using)

Teacher readiness: ████░░░░░░░░░░░░░░░░ (barely started) ← this is the bottleneck

The original post observes that many educators meet new technology with resistance and fear, and some struggle with basic computer operations. AI is a supercar already racing, but most “drivers” are still learning to open the door.

Real-World Use Cases

When teachers, not students, wield AI:

| Use Case | What AI Creates |
|---|---|
| Custom assessments | Rubric-aligned tests targeting specific learning gaps |
| Personalized feedback | Individual comments connecting weak points to course materials |
| Adaptive materials | Multiple difficulty levels of the same concept explanation |
| Translated content | Lectures in students’ native languages |
| Interactive exercises | Custom practice problems with worked solutions |
| Academic integrity | Cross-reference writing styles to detect anomalies |

This is the “factory” metaphor: AI isn’t replacing the teacher; it’s manufacturing teaching materials at scale under the teacher’s direction.

Anthropic’s Answer: Train the Teachers

Anthropic launched a concrete response through three connected programs:

Teach For All Partnership (Jan 2026)

| Program | What It Does | Scale |
|---|---|---|
| AI Fluency Learning Series | 6 live episodes on AI fundamentals and classroom applications | 530+ educators (first cohort) |
| Claude Connect | Ongoing community for sharing prompts, use cases, and discoveries | 1,000+ educators, 60+ countries |
| Claude Lab | Advanced program with free Pro access and a direct channel to Anthropic | Selected educators |

The partnership spans 100,000+ teachers across 63 countries, serving 1.5M+ students.

The partnership’s design principle: “For AI to reach its potential to make education more equitable, teachers need to be the ones shaping how it’s used.”

Claude for Education Features

Anthropic has also built education-specific Claude features:

- Learning mode: guides students’ thinking through questions instead of giving answers

- Socratic dialogue: develops critical thinking rather than short-circuiting it

- Campus Ambassador program for students who want to lead AI literacy efforts

The Community Debate

Commenters split into two camps:

| Position | What the Thread Said |
|---|---|
| “Tech empowers individuals” | Romantic but naive: a tool’s value depends on the user’s cognitive depth |
| “The issue is people, not tech” | For students lacking drive, AI is poison. For teachers with expertise, AI is wings. |

The synthesis: AI is “poison” when used for shortcuts but “wings” when used for innovation, and which one it becomes depends entirely on who is using it and how they were trained.

How the LearnAI Team Could Use This

This framing has direct implications:

- Focus LAI on teacher empowerment, not student automation

- Build the “factory” tools: templates, skills, and workflows that teachers can use to create custom materials

- The wiki itself is an example: a teacher (Q) using AI to build a knowledge resource that students can learn from

- Train the trainers first — AI literacy for faculty is more impactful than AI literacy for students